World’s first remote mind control technology is developed in South Korea

By Matthew Phelan Senior

Posted on https://www.dailymail.co.uk

on July 22, 2024

A remote, ‘long-range’ and ‘large-volume’ mind control device has been unveiled in South Korea — with plans to use the tech for ‘non-invasive’ medical procedures.

Researchers with Korea’s Institute for Basic Science (IBS) developed the hardware, which manipulates the brain from a distance using magnetic fields, and tested the tech by inducing ‘maternal’ instincts in their female test subjects: mice.

In another test, they exposed a test group of lab mice to magnetic fields designed to reduce appetite, leading to a 10-percent loss in body weight, or about 4.3 grams.

‘This is the world’s first technology to freely control specific brain regions using magnetic fields,’ according to the professor of chemistry and nanomedicine who helped spearhead the new effort.

‘We expect it to be widely used in research to understand brain functions, sophisticated artificial neural networks, two-way brain-computer interface technologies, and new treatments for neurological disorders,’ Dr Cheon said.

But despite the science fiction quality of remote mind control, health experts noted that magnetic fields have been used successfully in medical imaging for decades.

‘The concept of using magnetic fields to manipulate biological systems is now well established,’ Dr Felix Leroy, a senior scientist at Spain’s Instituto de Neurociencias, wrote in an op-ed that accompanied the new study in Nature Nanotechnology.

‘It has been applied in various fields,’ he noted, including ‘magnetic resonance imaging [MRI], transcranial magnetic stimulation, and magnetic hyperthermia for cancer treatment.’

The novelty added by South Korea’s IBS team was the genetic fabrication of specialized nanomaterials, whose role within neurons in the brain could be tuned from afar via carefully selected magnetic fields.

The technique, formally called magneto-mechanical genetics (MMG), guided Dr Cheon and his colleagues as they developed their brain-modulating technology.

In the new study, published this July in Nature Nanotechnology, the team called their invention Nano-MIND, for ‘Nano-Magnetogenetic Interface for NeuroDynamics.’

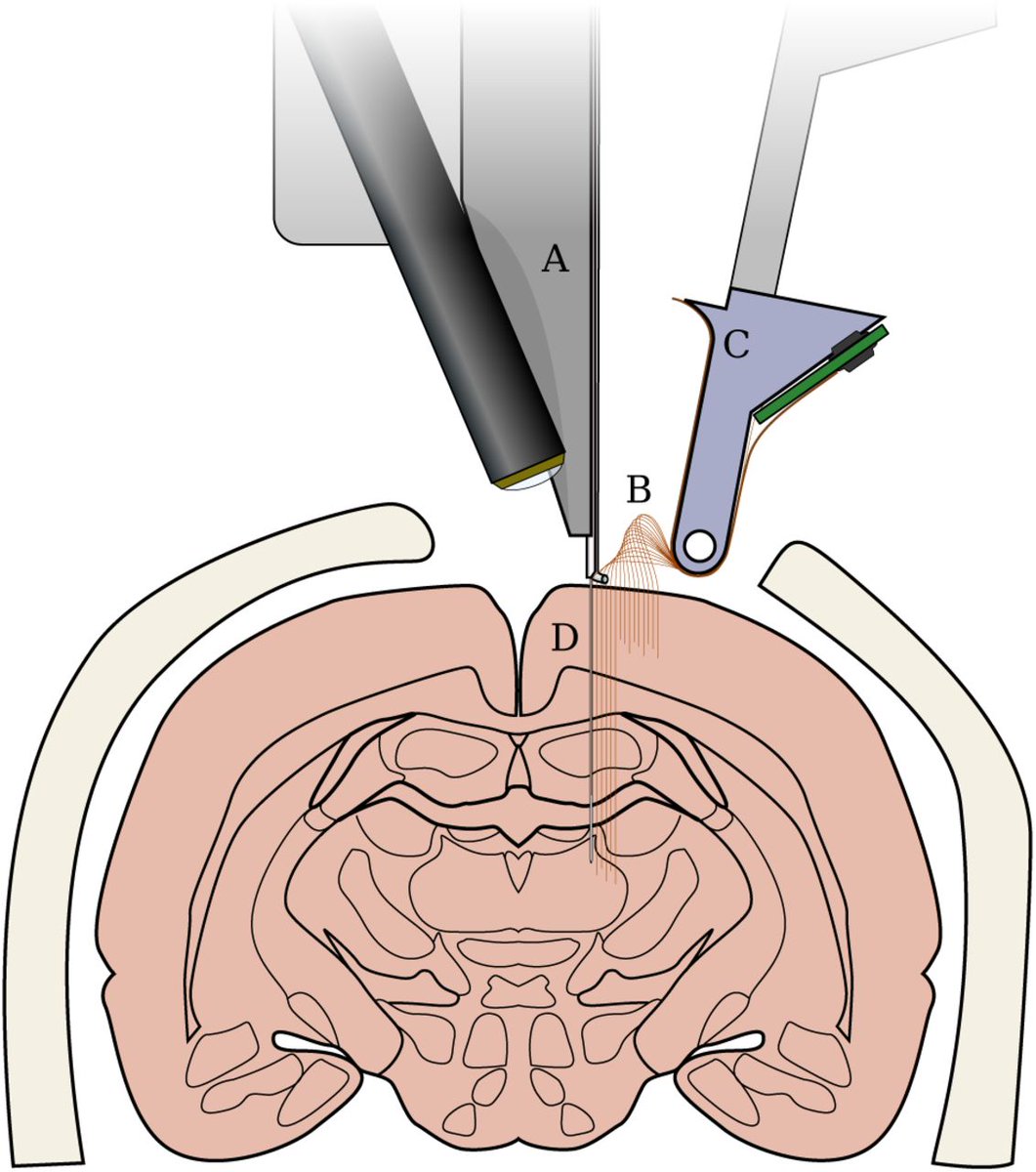

Dr Cheon Jinwoo, director of South Korea’s IBS Center for Nanomedicine, said he expects the new hardware to be used for a variety of healthcare applications where it is sorely needed. Above, a diagram of the magnetic device in which the study’s lab mice were remote-controlled.

The scientists designed special mice for their experiments using a gene-replacement technique known to researchers as Cre-Lox recombination.

These genetically engineered lab mice developed more magnetically sensitive ‘ion channels’ which act as gates in their neurons, or nervous system cells, allowing certain molecules and atoms to enter at certain times and certain rates.

In the group’s test of maternal instincts, MMG stimulation of certain female lab mice encouraged them to locate and collect their lost ‘pups’ more quickly in a maze-like course.

The female mice stimulated by Nano-MIND began approaching the pups faster, on average 16 seconds faster, and ‘quickly retrieved all the three pups in all the trials,’ the researchers wrote.

The team also conducted a two-week experiment with control group and experimental group mice on how these genetically engineered animals would react to Nano-MIND magnetic impulses encouraging them to eat more or eat less.

The technology proved capable of encouraging the mice both to overeat and to undereat.

In the experiment in which the MMG signal encouraged the mice to eat, their body weight increased by approximately 7.5 grams on average, roughly an 18-percent gain.

The appetite-suppressing magnetic impulse produced a smaller change (a 10-percent loss in body weight, or about 4.3 grams) but, significantly, did not slow the mice down or inhibit their ability to move.

‘Reduced feeding did not affect locomotion,’ they wrote, implying that the effect was purely on appetite and not otherwise handicapping the mice’s ability to operate.

The technology, Dr Cheon and his team wrote, will find its most immediate use in helping health researchers understand which parts of the brain and the rest of the nervous system are responsible for which moods and other behaviors.

But in his opinion piece on the Nano-MIND innovation and its gene-replacement aspect, Dr Leroy in Spain cautioned against rushing too soon to human testing.

‘Further studies are needed to assess potential cumulative effects, including neuroadaptation or neurotoxicity,’ Dr Leroy advised.

–————————————————————————————————————————————————————————–

AI-supercharged Neurotech Threatens Mental Privacy: UNESCO

By

Posted on https://www.ibtimes.com

on July 13, 2023

The combination of “warp speed” advances in neurotechnology, such as brain implants or scans that can increasingly peek inside minds, and artificial intelligence poses a threat to mental privacy, UNESCO warned on Thursday.

The UN’s agency for science and culture has started developing a global “ethical framework” to address human rights concerns posed by neurotechnology, it said at a conference in Paris.

Neurotechnology is a growing field seeking to connect electronic devices to the nervous system, so far mostly to treat neurological disorders and restore movement, communication, vision or hearing.

Recently neurotechnology has been supercharged by artificial intelligence algorithms which can process and learn from data in ways never before possible, said Mariagrazia Squicciarini, a UNESCO economist specialising in AI.

“It’s like putting neurotech on steroids,” she told AFP.

Gabriela Ramos, UNESCO’s assistant director-general for social and human sciences, said that this convergence of neurotechnology and AI was “far-reaching and potentially harmful”. “We are on a path to a world in which algorithms will enable us to decode people’s mental processes and directly manipulate the brain mechanisms underlying their intentions, emotions and decisions,” she told the conference.

In May, scientists in the United States revealed they had used brain scans and AI to turn “the gist” of what people were thinking into written words — as long as they had spent long hours inside a large fMRI machine.

Later that month, billionaire Elon Musk’s firm Neuralink received approval to test its coin-sized brain implants on humans in the United States. Musk has said his ultimate goal is to ensure that humans are not intellectually overwhelmed by AI — though on Thursday he launched his own artificial intelligence company xAI.

Squicciarini emphasised that UNESCO was not saying that neurotechnology is a bad thing. “If anything it’s fantastic,” she said, pointing to how the technology could let blind people see again, or paralysed people walk.

But with neurotechnology “advancing at warp speed,” UN Secretary-General Antonio Guterres said that ethical guidelines were needed to protect human rights.

Investment in neurotech companies increased 22-fold from 2010 to 2020, rising to $33.2 billion, according to a new UNESCO report co-authored by Squicciarini.

The number of patents for neurotech devices doubled between 2015 and 2020, with the United States accounting for nearly half of all patents worldwide, the report said.

The neurotech devices market is projected to reach $24.2 billion by 2027.

–————————————————————————————————————————————————————————–

EXCLUSIVE: UN warns brain chips like Elon Musk’s Neuralink could be used as ‘personality-altering’ weapons — as FDA approves tech for human trials

By Matthew Phelan

Posted on https://www.dailymail.co.uk

on July 19, 2023

- UN hopes to ensure humanity’s ‘mental privacy, freedom of thought and free will’

- UN’s International Bioethics Committee called for protected ‘neurorights,’ ‘data privacy transparency’ and strategies to anticipate and prevent neurotech abuses

- IBC advised that engineers and programmers in neurotech should swear an oath like ‘the Hippocratic oath’ sworn to by doctors and other medical professionals

A United Nations panel has warned that brain chip technology being pioneered by Elon Musk could be abused for ‘neurosurveillance’ violating ‘mental privacy,’ or ‘even to implement forms of forced re-education,’ threatening human rights worldwide.

The UN’s agency for science and culture (UNESCO) said neurotechnology like Musk’s Neuralink, if left unregulated, will lead to ‘new possibilities of monitoring and manipulating the human mind through neuroimaging’ and ‘personality-altering’ tech.

UNESCO is now strategizing on a worldwide ‘ethical framework’ to protect humanity from the potential abuses of the technology — which they fear will be accelerated by advances in AI.

‘We are on a path to a world in which algorithms will enable us to decode people’s mental processes,’ said UNESCO’s assistant director-general for social and human sciences, Gabriela Ramos.

The implications are ‘far-reaching and potentially harmful,’ Ramos said, given breakthroughs in neurotechnology that could ‘directly manipulate the brain mechanisms’ in humans, ‘underlying their intentions, emotions and decisions.’

The committee’s warnings come less than two months after the US Food and Drug Administration (FDA) gave Elon Musk’s brain-chip implant company Neuralink federal approval to conduct trials on humans.

UNESCO’s assistant director-general for social and human sciences, Gabriela Ramos, told a 1,000-participant bioethics conference last Thursday that the convergence of neurotech and AI would enable algorithms to ‘decode people’s mental processes and directly manipulate the brain’

Above, Emily Cross, a professor of Cognitive and Social Neuroscience at the public research university ETH Zurich presented the UN bioethics committee’s key findings of the risks, promises, legal and ethical issues surrounding the new advances in neurotechnology

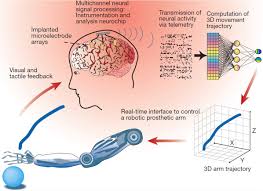

Neurotechnology like Musk’s Neuralink implants will connect the brain to computing power via thread-like electrodes sewn into certain areas of the brain.

Neuralink’s electrodes will communicate with a chip to read signals produced by special cells in the brain called neurons, which transmit messages to other cells in the body, like our muscles and nerves.

Because neuron signals are translated directly into motor commands, Neuralink could allow humans to control external technologies, such as computers or smartphones, or to restore lost bodily functions or muscle movements, with their minds.

‘It’s like replacing a piece of the skull with a smartwatch,’ Musk has said.

But those communications pathways, as the UNESCO panel warned, cut both ways.

This May, scientists at the University of Texas at Austin revealed they were able to train an AI to effectively read people’s minds, converting brain data from test subjects taken via functional magnetic resonance imaging (fMRI) into written words.

UNESCO economist Mariagrazia Squicciarini, who specializes in artificial intelligence issues, noted that the capacity for machine learning algorithms to rapidly pull patterns out of complex data, like fMRI brain scans, will accelerate brain chips’ access to the human mind.

‘It’s like putting neurotech on steroids,’ Squicciarini said.

UNESCO convened a 1,000-participant conference of its International Bioethics Committee (IBC) in Paris last Thursday and released recommendations Tuesday.

‘The spectacular development of neurotechnology as well as new biotechnologies, nanotechnologies and ICTs makes machines more and more humanoid,’ the IBC said in their report, ‘and people are becoming more connected to machines and AI.’

The IBC weighed numerous dystopian scenarios seemingly out of science fiction last week in their effort to get ahead of rapidly advancing threats to human ‘neurorights’ which have yet to even be codified under international law.

‘It is necessary to anticipate the effects of implementing neurotechnology,’ the UN panel noted. ‘There is a direct connection between freedom of thought, the rule of law and democracy.’

Among the many recommendations in its 91-page report, the committee called for wider transparency on neurotech research from industry and academia, as well as the drafting of ‘neurorights’ for inclusion in international human rights law.

The IBC report described the new tech in stark terms as a challenge to ‘some basic aspects of human dignity, such as privacy of mental life or individual agency.’

But UNESCO’s assistant director-general for social and human sciences, Gabriela Ramos, put the converging power of neurotechnology and AI into even starker terms.

Neuralink co-founder Elon Musk has likened the surgical procedure to ‘replacing a piece of the skull with a smartwatch,’ as the device connects to the brain, but rests on the scalp. Above, images from a primate experiment made public at a November 2022 Neuralink Show and Tell

While Elon Musk’s plans for Neuralink have focused on the device’s potential health benefits — helping to cure a range of conditions from obesity and autism to depression and schizophrenia — US federal regulators have focused instead on its capacity for harm.

As reported by Reuters last December, the United States Department of Agriculture’s Inspector General has been investigating, at the request of a federal prosecutor, potential violations of the Animal Welfare Act at Neuralink.

The federal law governs how researchers are permitted to treat certain types of animals, within medical studies and other kinds of scientific experiments.

Neuralink has been the subject of congressional scrutiny as well, with US lawmakers urging regulators this past May to investigate whether the panel that oversees animal testing at Neuralink has contributed to botched and rushed experiments.

Neuralink’s recent FDA approval came after Musk had claimed on at least four occasions since 2019 that his medical device company would soon begin human trials of a brain implant.

Over the course of those four years, the FDA had rejected several Neuralink applications for human experimentation approval, most recently in early 2022, according to seven current and former employees who spoke to Reuters.

Musk has emphasized Neuralink’s potential to treat severe conditions such as paralysis and blindness, and occasionally more trivial applications, such as web browsing or telepathy.

But UNESCO’s IBC drew specific attention to the threat posed by ‘dual use’ AI brain chip technologies, which could easily be reprogrammed or retooled for less than benevolent applications on the human mind.

Amandeep Singh Gill, the Envoy on Technology for the United Nations Secretary-General, told UNESCO’s International Bioethics Committee on Thursday that industry must be ‘brought into this discussion early’ to reduce the potential for abuse of neurotech ‘into the future’

‘The dual-use argument, which was generally brought up by bio-conservatives to emphasize risks,’ the committee noted in their report, ‘is now being used by bio-progressives to justify some development methods.’

‘Measures must be in place to protect against neurotechnologies being open to dual use,’ according to the IBC, to keep medically beneficial neurotech from being twisted by hackers, corporate profiteers or even authoritarian regimes.

‘Neurotechnology has the potential for tremendous benefits in health, education and social relationships, but also holds the potential to deepen social inequities, to harm individuals’ privacy and to provide methods of manipulating individuals,’ they said.

Most of the UNESCO conference’s speakers voiced strong support for the creation of an actionable framework on neurotech similar to UNESCO’s Recommendation on the Ethics of Artificial Intelligence.

As Gabriela Ramos, UNESCO’s Assistant Director-General for Social and Human Sciences, put it, ‘Groundbreaking developments in neurotechnology offer unprecedented potential. But we should remain aware of its negative impact if it is employed for malicious purposes.’

‘We must act now,’ she said, ‘to ensure it is not misused and does not threaten our societies and democracies.’

–————————————————————————————————————————————————————————–

Hearing of microwave pulses by humans and animals: effects, mechanism, and thresholds

by

Posted on https://pubmed.ncbi.nlm.nih.go

in June 2007

Abstract

The hearing of microwave pulses is a unique exception to the airborne or bone-conducted sound energy normally encountered in human auditory perception. The hearing apparatus commonly responds to airborne or bone-conducted acoustic or sound pressure waves in the audible frequency range. But the hearing of microwave pulses involves electromagnetic waves whose frequency ranges from hundreds of MHz to tens of GHz. Since electromagnetic waves (e.g., light) are seen but not heard, the report of auditory perception of microwave pulses was at once astonishing and intriguing. Moreover, it stood in sharp contrast to the responses associated with continuous-wave microwave radiation. Experimental and theoretical studies have shown that the microwave auditory phenomenon does not arise from an interaction of microwave pulses directly with the auditory nerves or neurons along the auditory neurophysiological pathways of the central nervous system. Instead, the microwave pulse, upon absorption by soft tissues in the head, launches a thermoelastic wave of acoustic pressure that travels by bone conduction to the inner ear. There, it activates the cochlear receptors via the same process involved for normal hearing. Aside from tissue heating, microwave auditory effect is the most widely accepted biological effect of microwave radiation with a known mechanism of interaction: the thermoelastic theory. The phenomenon, mechanism, power requirement, pressure amplitude, and auditory thresholds of microwave hearing are discussed in this paper. A specific emphasis is placed on human exposures to wireless communication fields and magnetic resonance imaging (MRI) coils.

—————————————————————————————————————————————————————————

Behind NATO’s ‘cognitive warfare’: ‘Battle for your brain’ waged by Western militaries

by Ben Norton

NATO is developing new forms of warfare to wage a “battle for the brain,” as the military alliance put it.

The US-led NATO military cartel has tested novel modes of hybrid warfare against its self-declared adversaries, including economic warfare, cyber warfare, information warfare, and psychological warfare.

Now, NATO is spinning out an entirely new kind of combat it has branded cognitive warfare. Described as the “weaponization of brain sciences,” the new method involves “hacking the individual” by exploiting “the vulnerabilities of the human brain” in order to implement more sophisticated “social engineering.”

Until recently, NATO had divided war into five different operational domains: air, land, sea, space, and cyber. But with its development of cognitive warfare strategies, the military alliance is discussing a new, sixth level: the “human domain.”

A 2020 NATO-sponsored study of this new form of warfare clearly explained, “While actions taken in the five domains are executed in order to have an effect on the human domain, cognitive warfare’s objective is to make everyone a weapon.”

“The brain will be the battlefield of the 21st century,” the report stressed. “Humans are the contested domain,” and “future conflicts will likely occur amongst the people digitally first and physically thereafter in proximity to hubs of political and economic power.”

While the NATO-backed study insisted that much of its research on cognitive warfare is designed for defensive purposes, it also conceded that the military alliance is developing offensive tactics, stating, “The human is very often the main vulnerability, and it should be acknowledged in order to protect NATO’s human capital, but also to be able to benefit from our adversaries’s vulnerabilities.”

In a chilling disclosure, the report said explicitly that “the objective of Cognitive Warfare is to harm societies and not only the military.”

With entire civilian populations in NATO’s crosshairs, the report emphasized that Western militaries must work more closely with academia to weaponize social sciences and human sciences and help the alliance develop its cognitive warfare capacities.

The study described this phenomenon as “the militarization of brain science.” But it appears clear that NATO’s development of cognitive warfare will lead to a militarization of all aspects of human society and psychology, from the most intimate of social relationships to the mind itself.

Such all-encompassing militarization of society is reflected in the paranoid tone of the NATO-sponsored report, which warned of “an embedded fifth column, where everyone, unbeknownst to him or her, is behaving according to the plans of one of our competitors.” The study makes it clear that those “competitors” purportedly exploiting the consciousness of Western dissidents are China and Russia.

In other words, this document shows that figures in the NATO military cartel increasingly see their own domestic population as a threat, fearing civilians to be potential Chinese or Russian sleeper cells, dastardly “fifth columns” that challenge the stability of “Western liberal democracies.”

NATO’s development of novel forms of hybrid warfare comes at a time when member states’ military campaigns are targeting domestic populations on an unprecedented level.

The Ottawa Citizen reported this September that the Canadian military’s Joint Operations Command took advantage of the Covid-19 pandemic to wage an information war against its own domestic population, testing out propaganda tactics on Canadian civilians.

Internal NATO-sponsored reports suggest that this disclosure is just scratching the surface of a wave of new unconventional warfare techniques that Western militaries are employing around the world.

Canada hosts ‘NATO Innovation Challenge’ on cognitive warfare

Twice each year, NATO holds a “pitch-style event” that it brands as an “Innovation Challenge.” These campaigns, one hosted in the spring and the other in the fall by alternating member states, call on private companies, organizations, and researchers to help develop new tactics and technologies for the military alliance.

The shark tank-like challenges reflect the predominant influence of neoliberal ideology within NATO, as participants mobilize the free market, public-private partnerships, and the promise of cash prizes to advance the agenda of the military-industrial complex.

NATO’s Fall 2021 Innovation Challenge is hosted by Canada, and is titled “The invisible threat: Tools for countering cognitive warfare.”

…

NATO-backed Canadian military officials discuss cognitive warfare in panel event

An advocacy group called the NATO Association of Canada has mobilized to support this Innovation Challenge, working closely with military contractors to attract the private sector to invest in further research on behalf of NATO – and its own bottom line.

While the NATO Association of Canada (NAOC) is technically an independent NGO, its mission is to promote NATO, and the organization boasts on its website, “The NAOC has strong ties with the Government of Canada including Global Affairs Canada and the Department of National Defence.”

As part of its efforts to promote Canada’s NATO Innovation Challenge, the NAOC held a panel discussion on cognitive warfare on October 5.

François du Cluzel, the researcher who wrote the definitive 2020 NATO-sponsored study on cognitive warfare, participated in the event alongside NATO-backed Canadian military officers.

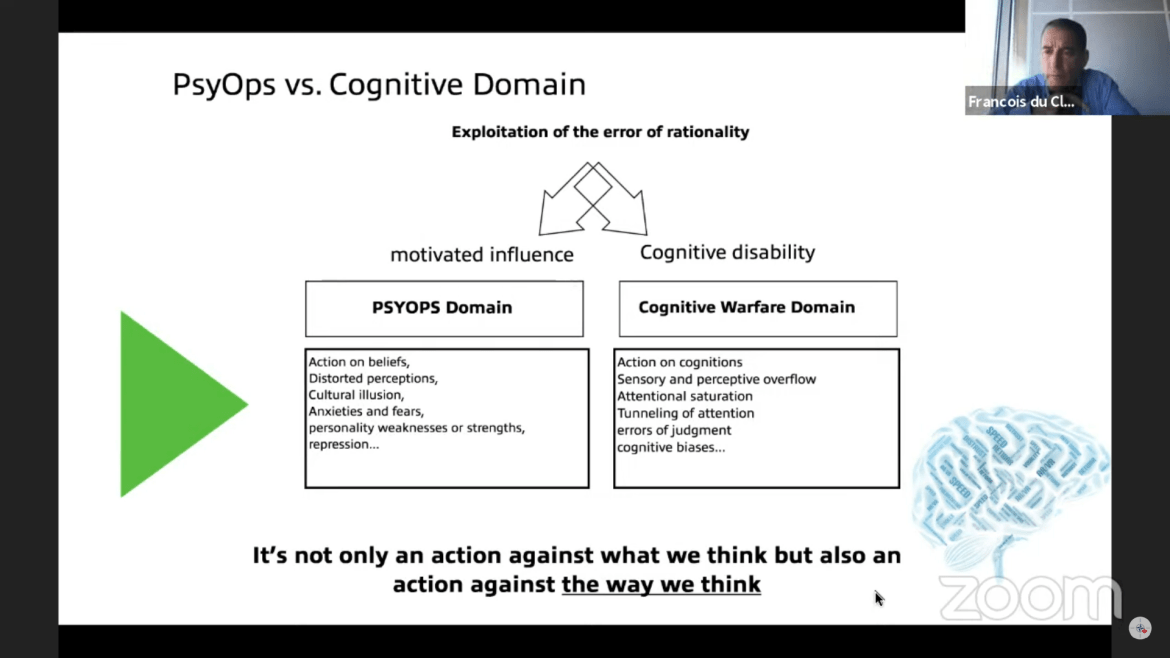

The October 5 panel on cognitive warfare, hosted by the NATO Association of Canada

The panel was overseen by Robert Baines, president of the NATO Association of Canada. It was moderated by Garrick Ngai, a marketing executive in the weapons industry who serves as an adviser to the Canadian Department of National Defence and as vice president and director of the NAOC.

Baines opened the event, noting that participants would discuss “cognitive warfare and new domain of competition, where state and non-state actors aim to influence what people think and how they act.”

The NAOC president also happily noted the lucrative “opportunities for Canadian companies” that this NATO Innovation Challenge promised.

NATO researcher describes cognitive warfare as ‘ways of harming the brain’

The October 5 panel kicked off with François du Cluzel, a former French military officer who in 2013 helped to create the NATO Innovation Hub (iHub), which he has managed ever since from its base in Norfolk, Virginia.

Although the iHub insists on its website, for legal reasons, that the “opinions expressed on this platform don’t constitute NATO or any other organization points of view,” the organization is sponsored by the Allied Command Transformation (ACT), described as “one of two Strategic Commands at the head of NATO’s military command structure.”

The Innovation Hub, therefore, acts as a kind of in-house NATO research center or think tank. Its research is not necessarily official NATO policy, but it is directly supported and overseen by NATO.

In 2020, NATO’s Supreme Allied Commander Transformation (SACT) tasked du Cluzel, as manager of the iHub, to conduct a six-month study on cognitive warfare.

Du Cluzel summarized his research at the panel this October. He opened his remarks by noting that cognitive warfare “right now is one of the hottest topics for NATO,” and “has become a recurring term in military terminology in recent years.”

Although French, du Cluzel emphasized that cognitive warfare strategy “is being currently developed by my command here in Norfolk, USA.”

The NATO Innovation Hub manager spoke with a PowerPoint presentation, and opened with a provocative slide that described cognitive warfare as “A Battle for the Brain.”

“Cognitive warfare is a new concept that starts in the information sphere, that is a kind of hybrid warfare,” du Cluzel said.

“It starts with hyper-connectivity. Everyone has a cell phone,” he continued. “It starts with information because information is, if I may say, the fuel of cognitive warfare. But it goes way beyond solely information, which is a standalone operation – information warfare is a standalone operation.”

Cognitive warfare overlaps with Big Tech corporations and mass surveillance, because “it’s all about leveraging the big data,” du Cluzel explained. “We produce data everywhere we go. Every minute, every second we go, we go online. And this is extremely easy to leverage those data in order to better know you and use that knowledge to change the way you think.”

Naturally, the NATO researcher claimed foreign “adversaries” are the supposed aggressors employing cognitive warfare. But at the same time, he made it clear that the Western military alliance is developing its own tactics.

Du Cluzel defined cognitive warfare as the “art of using technologies to alter the cognition of human targets.”

Those technologies, he noted, incorporate the fields of NBIC – nanotechnology, biotechnology, information technology, and cognitive science. All together, “it makes a kind of very dangerous cocktail that can further manipulate the brain,” he said.

Du Cluzel went on to explain that the exotic new method of attack “goes well beyond” information warfare or psychological operations (psyops).

“Cognitive warfare is not only a fight against what we think, but it’s rather a fight against the way we think, if we can change the way people think,” he said. “It’s much more powerful and it goes way beyond the information [warfare] and psyops.”

Du Cluzel continued: “It’s crucial to understand that it’s a game on our cognition, on the way our brain processes information and turns it into knowledge, rather than solely a game on information or on psychological aspects of our brains. It’s not only an action against what we think, but also an action against the way we think, the way we process information and turn it into knowledge.”

“In other words, cognitive warfare is not just another word, another name for information warfare. It is a war on our individual processor, our brain.”

The NATO researcher stressed that “this is extremely important for us in the military,” because “it has the potential, by developing new weapons and ways of harming the brain, it has the potential to engage neuroscience and technology in many, many different approaches to influence human ecology… because you all know that it’s very easy to turn a civilian technology into a military one.”

As for who the targets of cognitive warfare could be, du Cluzel revealed that anyone and everyone is on the table.

“Cognitive warfare has universal reach, from starting with the individual to states and multinational organizations,” he said. “Its field of action is global and aim to seize control of the human being, civilian as well as military.”

And the private sector has a financial interest in advancing cognitive warfare research, he noted: “The massive worldwide investments made in neurosciences suggests that the cognitive domain will probably one of the battlefields of the future.”

The development of cognitive warfare totally transforms military conflict as we know it, du Cluzel said, adding “a third major combat dimension to the modern battlefield: to the physical and informational dimension is now added a cognitive dimension.”

This “creates a new space of competition beyond what is called the five domains of operations – or land, sea, air, cyber, and space domains. Warfare in the cognitive arena mobilizes a wider range of battle spaces than solely the physical and information dimensions can do.”

In short, humans themselves are the new contested domain in this novel mode of hybrid warfare, alongside land, sea, air, cyber, and outer space.

NATO’s cognitive warfare study warns of “embedded fifth column”

The study that NATO Innovation Hub manager François du Cluzel conducted, from June to November 2020, was sponsored by the military cartel’s Allied Command Transformation, and published as a 45-page report in January 2021.

The chilling document shows how contemporary warfare has reached a kind of dystopian stage, once imaginable only in science fiction.

“The nature of warfare has changed,” the report emphasized. “The majority of current conflicts remain below the threshold of the traditionally accepted definition of warfare, but new forms of warfare have emerged such as Cognitive Warfare (CW), while the human mind is now being considered as a new domain of war.”

For NATO, research on cognitive warfare is not just defensive; it is very much offensive as well.

“Developing capabilities to harm the cognitive abilities of opponents will be a necessity,” du Cluzel’s report stated clearly. “In other words, NATO will need to get the ability to safeguard her decision making process and disrupt the adversary’s one.”

And anyone could be a target of these cognitive warfare operations: “Any user of modern information technologies is a potential target. It targets the whole of a nation’s human capital,” the report ominously added.

“As well as the potential execution of a cognitive war to complement to a military conflict, it can also be conducted alone, without any link to an engagement of the armed forces,” the study went on. “Moreover, cognitive warfare is potentially endless since there can be no peace treaty or surrender for this type of conflict.”

Just as this new mode of battle has no geographic borders, it also has no time limit: “This battlefield is global via the internet. With no beginning and no end, this conquest knows no respite, punctuated by notifications from our smartphones, anywhere, 24 hours a day, 7 days a week.”

The NATO-sponsored study noted that “some NATO Nations have already acknowledged that neuroscientific techniques and technologies have high potential for operational use in a variety of security, defense and intelligence enterprises.”

It spoke of breakthroughs in “neuroscientific methods and technologies” (neuroS/T), and listed among their potential applications “uses of research findings and products to directly facilitate the performance of combatants, the integration of human machine interfaces to optimise combat capabilities of semi autonomous vehicles (e.g., drones), and development of biological and chemical weapons (i.e., neuroweapons).”

The Pentagon is among the primary institutions advancing this novel research, as the report highlighted: “Although a number of nations have pursued, and are currently pursuing neuroscientific research and development for military purposes, perhaps the most proactive efforts in this regard have been conducted by the United States Department of Defense; with most notable and rapidly maturing research and development conducted by the Defense Advanced Research Projects Agency (DARPA) and Intelligence Advanced Research Projects Activity (IARPA).”

Military uses of neuroS/T research, the study indicated, include intelligence gathering, training, “optimising performance and resilience in combat and military support personnel,” and of course “direct weaponisation of neuroscience and neurotechnology.”

This weaponization of neuroS/T can and will be fatal, the NATO-sponsored study made clear. The research can “be utilised to mitigate aggression and foster cognitions and emotions of affiliation or passivity; induce morbidity, disability or suffering; and ‘neutralise’ potential opponents or incur mortality” – in other words, to maim and kill people.

The report quoted US Major General Robert H. Scales, who summarized NATO’s new combat philosophy: “Victory will be defined more in terms of capturing the psycho-cultural rather than the geographical high ground.”

And as NATO develops tactics of cognitive warfare to “capture the psycho-cultural,” it is also increasingly weaponizing various scientific fields.

The study spoke of “the crucible of data sciences and human sciences,” and stressed that “the combination of Social Sciences and System Engineering will be key in helping military analysts to improve the production of intelligence.”

“If kinetic power cannot defeat the enemy,” it said, “psychology and related behavioural and social sciences stand to fill the void.”

“Leveraging social sciences will be central to the development of the Human Domain Plan of Operations,” the report went on. “It will support the combat operations by providing potential courses of action for the whole surrounding Human Environment including enemy forces, but also determining key human elements such as the Cognitive center of gravity, the desired behaviour as the end state.”

All academic disciplines will be implicated in cognitive warfare, not just the hard sciences. “Within the military, expertise on anthropology, ethnography, history, psychology among other areas will be more than ever required to cooperate with the military,” the NATO-sponsored study stated.

The report nears its conclusion with an eerie quote: “Today’s progresses in nanotechnology, biotechnology, information technology and cognitive science (NBIC), boosted by the seemingly unstoppable march of a triumphant troika made of Artificial Intelligence, Big Data and civilisational ‘digital addiction’ have created a much more ominous prospect: an embedded fifth column, where everyone, unbeknownst to him or her, is behaving according to the plans of one of our competitors.”

“The modern concept of war is not about weapons but about influence,” it posited. “Victory in the long run will remain solely dependent on the ability to influence, affect, change or impact the cognitive domain.”

The NATO-sponsored study then closed with a final paragraph that makes it clear beyond doubt that the Western military alliance’s ultimate goal is not only physical control of the planet, but also control over people’s minds:

“Cognitive warfare may well be the missing element that allows the transition from military victory on the battlefield to lasting political success. The human domain might well be the decisive domain, wherein multi-domain operations achieve the commander’s effect. The five first domains can give tactical and operational victories; only the human domain can achieve the final and full victory.”

Canadian Special Operations officer emphasizes importance of cognitive warfare

When François du Cluzel, the NATO researcher who conducted the study on cognitive warfare, concluded his remarks in the October 5 NATO Association of Canada panel, he was followed by Andy Bonvie, a commanding officer at the Canadian Special Operations Training Centre.

With more than 30 years of experience with the Canadian Armed Forces, Bonvie spoke of how Western militaries are making use of research by du Cluzel and others, and incorporating novel cognitive warfare techniques into their combat activities.

“Cognitive warfare is a new type of hybrid warfare for us,” Bonvie said. “And it means that we need to look at the traditional thresholds of conflict and how the things that are being done are really below those thresholds of conflict, cognitive attacks, and non-kinetic forms and non-combative threats to us. We need to understand these attacks better and adjust their actions and our training accordingly to be able to operate in these different environments.”

Although he portrayed NATO’s actions as “defensive,” claiming “adversaries” were using cognitive warfare against them, Bonvie was unambiguous about the fact that Western militaries are developing these techniques themselves, to maintain a “tactical advantage.”

“We cannot lose the tactical advantage for our troops that we’re placing forward as it spans not only tactically, but strategically,” he said. “Some of those different capabilities that we have that we enjoy all of a sudden could be pivoted to be used against us. So we have to better understand how quickly our adversaries adapt to things, and then be able to predict where they’re going in the future, to help us be and maintain the tactical advantage for our troops moving forward.”

‘Cognitive warfare is the most advanced form of manipulation seen to date’

Marie-Pierre Raymond, a retired Canadian lieutenant colonel who currently serves as a “defence scientist and innovation portfolio manager” for the Canadian Armed Forces’ Innovation for Defence Excellence and Security Program, also joined the October 5 panel.

“Long gone are the days when war was fought to acquire more land,” Raymond said. “Now the new objective is to change the adversaries’ ideologies, which makes the brain the center of gravity of the human. And it makes the human the contested domain, and the mind becomes the battlefield.”

“When we speak about hybrid threats, cognitive warfare is the most advanced form of manipulation seen to date,” she added, noting that it aims to influence individuals’ decision-making and “to influence a group of individuals on their behavior, with the aim of gaining a tactical or strategic advantage.”

Raymond noted that cognitive warfare also heavily overlaps with artificial intelligence, big data, and social media, and reflects “the rapid evolution of neurosciences as a tool of war.”

Raymond is helping to oversee the NATO Fall 2021 Innovation Challenge on behalf of Canada’s Department of National Defence, which delegated management responsibilities to the military’s Innovation for Defence Excellence and Security (IDEaS) Program, where she works.

In highly technical jargon, Raymond indicated that the cognitive warfare program is not solely defensive, but also offensive: “This challenge is calling for a solution that will support NATO’s nascent human domain and jump-start the development of a cognition ecosystem within the alliance, and that will support the development of new applications, new systems, new tools and concepts leading to concrete action in the cognitive domain.”

She emphasized that this “will require sustained cooperation between allies, innovators, and researchers to enable our troops to fight and win in the cognitive domain. This is what we are hoping to emerge from this call to innovators and researchers.”

To drum up corporate interest in the NATO Innovation Challenge, Raymond promised, “Applicants will receive national and international exposure and cash prizes for the best solution.” She then added tantalizingly, “This could also benefit the applicants by potentially providing them access to a market of 30 nations.”

Canadian military officer calls on corporations to invest in NATO’s cognitive warfare research

The other institution that is managing the Fall 2021 NATO Innovation Challenge on behalf of Canada’s Department of National Defence is the Special Operations Forces Command (CANSOFCOM).

A Canadian military officer who works with CANSOFCOM, Shekhar Gothi, was the final panelist in the October 5 NATO Association of Canada event. Gothi serves as CANSOFCOM’s “innovation officer” for Southern Ontario.

He closed the event with an appeal for corporate investment in NATO’s cognitive warfare research.

The bi-annual Innovation Challenge is “part of the NATO battle rhythm,” Gothi declared enthusiastically.

He noted that, in the spring of 2021, Portugal held a NATO Innovation Challenge focused on warfare in outer space.

In spring 2020, the Netherlands hosted a NATO Innovation Challenge focused on Covid-19.

Gothi reassured corporate investors that NATO will bend over backward to defend their bottom lines: “I can assure everyone that the NATO innovation challenge indicates that all innovators will maintain complete control of their intellectual property. So NATO won’t take control of that. Neither will Canada. Innovators will maintain their control over their IP.”

The comment was a fitting conclusion to the panel, affirming that NATO and its allies in the military-industrial complex not only seek to dominate the world and the humans that inhabit it with unsettling cognitive warfare techniques, but to also ensure that corporations and their shareholders continue to profit from these imperial endeavors.

—————————————————————————————————————————————————————————

Chile becomes first country to pass neuro-rights law

By Neeraja Seshadri | School of Law Christ U., IN

Posted on https://www.jurist.org

on October 2, 2021

Chile became the first country in the world to protect neuro-rights after the Chamber of Deputies on Wednesday approved an amendment to the Chilean Constitution. The bill is expected to be signed into law by the country’s president soon.

The amendment to the Chilean Constitution aims to define mental identity, for the first time in history, as a non-manipulable right, protecting it against technological advances in neuroscience and artificial intelligence. The bill sets out to protect the right to mental privacy, personal identity, free will, equitable access to technologies that augment human capacities, and protection against discrimination.

Guido Girardi, an opposition senator, said the amendment to the Chilean Constitution “is the first law in the world on neuro-rights, and it is the first step in a legislative ecosystem that will regulate artificial intelligence and neuro-technologies.”

The Chamber of Deputies said in a statement that “Chile’s law establishes that scientific and technological development must be at the service of people and that it will be carried out with respect for life and physical and mental integrity.”

—————————————————————————————————————————————————————————

Researcher finds a better way to tap into the brain

By Robert C. Jones Jr.

Posted on https://news.miami.edu

on March 03, 2021

At first, the images were blurry and fragmented, as if someone were looking through a narrow window and seeing only part of a picture. But with each passing day, everything Khizroev looked at appeared clearer, sharper.

It wasn’t until his eyesight had fully been restored that Khizroev grew to appreciate just how intricate and complex the organ that controls it really is. “The CPU of the internet of the body,” said the University of Miami researcher, describing the human brain as a central processing unit. “If only we could tap completely into it and unlock all of its secrets.”

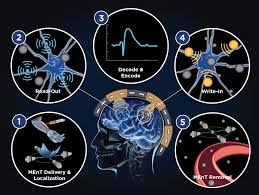

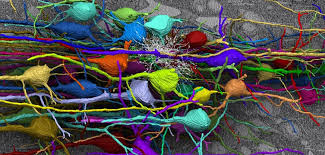

Today, Khizroev may be on the cusp of doing just that. Using a novel class of ultrafine units called magnetoelectric nanoparticles (MENPs), he and his research group are perfecting a method to talk to the brain without wires or implants. “Other efforts have used external instruments like microelectrodes to try to solve the mysteries of the brain, but because of its complexity and difficulty in accessing, such methods can only go so far,” said Khizroev, a professor of electrical and computer engineering at the University’s College of Engineering. “There are 80 billion neurons in the human brain, so imagine how difficult it would be to attach 80 billion microelectrodes to access every single neuron. The only way to truly tap in is wirelessly—through nanotechnology.”

So Khizroev and his research team plan to introduce millions of MENPs intravenously into the body, allowing the particles, which are two thousand times thinner than a human hair, to move freely through the bloodstream and cross the protective blood-brain barrier, the filtering mechanism that prevents toxins and pathogens from reaching the brain while at the same time allowing vital nutrients to get through.

“Our brains are pretty much electrical engines, and what’s so remarkable about MENPs is that they understand not only the language of electric fields but also that of magnetic fields,” Khizroev explained. “Once the MENPs are inside the brain and positioned next to neurons, we can stimulate them with an external magnetic field, and they in turn produce an electric field we can speak to, without having to use wires.”

To extract the information in real time, his team would use a special helmet with magnetic transducers that send and pick up signals. The group’s research has all the elements of a great science fiction novel, but in this case, the work is fact, not concoction.

From targeted drug delivery for the treatment of neurodegenerative diseases to the exchange of data between computers and the brain, the implications are enormous, said Khizroev, who holds a secondary appointment in the Department of Biochemistry and Molecular Biology at the Miller School of Medicine.

“We will learn how to treat Parkinson’s, Alzheimer’s, and even depression. Not only could it revolutionize the field of neuroscience, but it could potentially change many other aspects of our health care system,” he said. “We will finally learn how the computing architecture of the brain works. And in turn, such knowledge will help enable neuromorphic computing in which computers mimic the way the brain works. We’ll be able to perform computations with specifications achieved today only with the most advanced supercomputers.”

Khizroev is developing his wireless brain interface project with Ping Liang, cofounder of Cellular Nanomed, an Irvine, California-based biotechnology company. Back in 2010, the two scientists helped pioneer medical applications of MENPs.

Federal entities like the National Science Foundation have taken a keen interest in Khizroev’s research, funding the scientist to take on projects that exploit his MENP technology. In the most recent initiative, the Defense Advanced Research Projects Agency has funded the College of Engineering professor to develop a wireless brain-computer interface that would enable fast, effective, and intuitive hands-free interaction with military systems by able-bodied service members. The so-called BrainSTORMS project is now in its second phase and is expected to be completed in 2024.

“Right now, we’re just scratching the surface,” Khizroev said of his group’s groundbreaking work, which, in addition to computer and electrical engineers, involves neuroscientists, physicists, chemists, materials scientists, and biologists. His team of graduate and undergraduate students—Elric Zhang, Brayan Navarrete, Mostafa Abdel-Mottaleb, Manuel Campos-Alberteris, Yagmur Akin Yildirim, and Isadora Takako Smith—are playing instrumental roles on the project, he said.

Said Khizroev, “We can only imagine how our everyday life will change with such technology.”

—————————————————————————————————————————————————————————

‘This is not science fiction,’ say scientists pushing for ‘neuro-rights’

By Avi Asher-Schapiro

Posted on https://www.reuters.com

on December 3, 2020

(Thomson Reuters Foundation) – Scientific advances from deep brain stimulation to wearable scanners are making manipulation of the human mind increasingly possible, creating a need for laws and protections to regulate use of the new tools, top neurologists said on Thursday.

A set of “neuro-rights” should be added to the Universal Declaration of Human Rights adopted by the United Nations, said Rafael Yuste, a neuroscience professor at New York’s Columbia University and organizer of the Morningside Group of scientists and ethicists proposing such standards.

Five rights would guard the brain against abuse from new technologies – rights to identity, free will and mental privacy along with the right of equal access to brain augmentation advances and protection from algorithmic bias, the group says.

“If you can record and change neurons, you can in principle read and write the minds of people,” Yuste said during an online panel at the Web Summit, a global tech conference.

“This is not science fiction. We are doing this in lab animals successfully.”

Neurotechnology has the potential to alter the mechanisms that make people human, so putting it in a “human rights framework” is appropriate, he added.

The U.N.’s declaration, which laid the groundwork for international human rights, was adopted in 1948, in the aftermath of World War II.

A need for neuro-rights will grow as the developments become more popular and commercialized, the neurologists said.

Many of these technologies so far have applications in medicine, such as brain-computer interfaces helping patients move prosthetic limbs or communicate after a brain injury.

But those neurotechnologies increasingly will be available outside of the medical context, said John Krakauer, a professor of neurology and neuroscience at Johns Hopkins University in Maryland.

“Deep down what people want is consumer technologies,” he said.

The U.S. Food and Drug Administration has approved deep brain stimulation procedures – implanting electrodes in the brain – to treat a range of disorders from Parkinson’s disease to epilepsy.

Some private companies sell wearable devices to monitor brain activity that claim to be capable of tracking moods and emotions.

Krakauer compared the latest neurotechnologies to advances such as social media and mass advertising that can be utilized to alter people’s preferences without their express consent.

“What’s changed now is that the tech can get under the skull and get at our neurons,” he said.

Globally, a number of legal measures are aimed at these advances, including legislation in Chile that if passed would be the first law to establish neuro-rights for citizens.

In November, the Spanish government proposed new rules for regulating artificial intelligence that include specific provisions for neuro-rights, Yuste said.

“This is the first time in history that humans can have access to the contents of people’s minds,” he said.

“We have to think very careful about how we are going to bring this into society.”

—————————————————————————————————————————————————————————

Genetically engineered ‘Magneto’ protein remotely controls brain and behaviour

By Mo Costandi

Posted on https://www.theguardian.com

on March 24, 2016

“Badass” new method uses a magnetized protein to activate brain cells rapidly, reversibly, and non-invasively.

Understanding how the brain generates behaviour is one of the ultimate goals of neuroscience – and one of its most difficult questions. In recent years, researchers have developed a number of methods that enable them to remotely control specified groups of neurons and to probe the workings of neuronal circuits.

The most powerful of these is a method called optogenetics, which enables researchers to switch populations of related neurons on or off on a millisecond-by-millisecond timescale with pulses of laser light. Another recently developed method, called chemogenetics, uses engineered proteins that are activated by designer drugs and can be targeted to specific cell types.

Although powerful, both of these methods have drawbacks. Optogenetics is invasive, requiring insertion of optical fibres that deliver the light pulses into the brain and, furthermore, the extent to which the light penetrates the dense brain tissue is severely limited. Chemogenetic approaches overcome both of these limitations, but typically induce biochemical reactions that take several seconds to activate nerve cells.

The new technique, developed in Ali Güler’s lab at the University of Virginia in Charlottesville, and described in an advance online publication in the journal Nature Neuroscience, is not only non-invasive, but can also activate neurons rapidly and reversibly.

Several earlier studies have shown that nerve cell proteins which are activated by heat and mechanical pressure can be genetically engineered so that they become sensitive to radio waves and magnetic fields, by attaching them to an iron-storing protein called ferritin, or to inorganic paramagnetic particles. These methods represent an important advance – they have, for example, already been used to regulate blood glucose levels in mice – but involve multiple components which have to be introduced separately.

The new technique builds on this earlier work, and is based on a protein called TRPV4, which is sensitive to both temperature and stretching forces. These stimuli open its central pore, allowing electrical current to flow through the cell membrane; this evokes nervous impulses that travel into the spinal cord and then up to the brain.

Güler and his colleagues reasoned that magnetic torque (or rotating) forces might activate TRPV4 by tugging open its central pore, and so they used genetic engineering to fuse the protein to the paramagnetic region of ferritin, together with short DNA sequences that signal cells to transport proteins to the nerve cell membrane and insert them into it.

When they introduced this genetic construct into human embryonic kidney cells growing in Petri dishes, the cells synthesized the ‘Magneto’ protein and inserted it into their membrane. Application of a magnetic field activated the engineered TRPV4 protein, as evidenced by transient increases in calcium ion concentration within the cells, which were detected with a fluorescence microscope.

Next, the researchers inserted the Magneto DNA sequence into the genome of a virus, together with the gene encoding green fluorescent protein, and regulatory DNA sequences that cause the construct to be expressed only in specified types of neurons. They then injected the virus into the brains of mice, targeting the entorhinal cortex, and dissected the animals’ brains to identify the cells that emitted green fluorescence. Using microelectrodes, they then showed that applying a magnetic field to the brain slices activated Magneto so that the cells produced nervous impulses.

To determine whether Magneto can be used to manipulate neuronal activity in live animals, they injected Magneto into zebrafish larvae, targeting neurons in the trunk and tail that normally control an escape response. They then placed the zebrafish larvae into a specially-built magnetised aquarium, and found that exposure to a magnetic field induced coiling manoeuvres similar to those that occur during the escape response. (This experiment involved a total of nine zebrafish larvae, and subsequent analyses revealed that each larva contained about 5 neurons expressing Magneto.)

In one final experiment, the researchers injected Magneto into the striatum of freely behaving mice, and then placed the animals into an apparatus split into magnetised and non-magnetised sections. The striatum is a deep brain structure involved in reward and motivation that receives dense input from dopamine-producing neurons. Mice expressing Magneto spent far more time in the magnetised areas than mice that did not, because activating the protein in striatal neurons made being in those areas rewarding for the mice. This shows that Magneto can remotely control the firing of neurons deep within the brain, and also control complex behaviours.

Neuroscientist Steve Ramirez of Harvard University, who uses optogenetics to manipulate memories in the brains of mice, says the study is “badass”.

“Previous attempts [using magnets to control neuronal activity] needed multiple components for the system to work – injecting magnetic particles, injecting a virus that expresses a heat-sensitive channel, [or] head-fixing the animal so that a coil could induce changes in magnetism,” he explains. “The problem with having a multi-component system is that there’s so much room for each individual piece to break down.”

“This system is a single, elegant virus that can be injected anywhere in the brain, which makes it technically easier and less likely for moving bells and whistles to break down,” he adds, “and their behavioral equipment was cleverly designed to contain magnets where appropriate so that the animals could be freely moving around.”

‘Magnetogenetics’ is therefore an important addition to neuroscientists’ toolbox, one that will undoubtedly be developed further and provide researchers with new ways of studying brain development and function.

Reference

Wheeler, M. A., et al. (2016). Genetically targeted magnetic control of the nervous system. Nat. Neurosci., DOI: 10.1038/nn.4265

—————————————————————————————————————————————————————————

Meet 10 Companies Working On Reading Your Thoughts (And Even Those Of Your Pets)

by Cathy Hackl

Posted on www.forbes.com

on June 21, 2020

Philosopher John Locke said, “I have always thought the actions of men the best interpreters of their thoughts.” Locke lived during the Age of Enlightenment. He probably wasn’t thinking about human-machine actions during his philosophical ponderings. But what does it mean when machine actions are the result of human thoughts? Many would argue that brain-machine and brain-computer interfaces, no longer the stuff of science fiction, are the next way we will communicate with machines and even with one another.

Brain-machine interfaces (BMI) and brain-computer interfaces (BCI) are devices that enable direct communication between a brain and an external device. BCIs let someone type onto a screen – without a keyboard. Brain-machine interfaces make it possible for amputees to move robotic limbs. BCIs range from intricate devices placed directly on the brain to devices that communicate with machines without invasive surgery.

This type of technology opens a whole world of business applications, from dangerous jobs that already use robots to manufacturing and even the consumer space. Brain-machine interfaces create a new way for humans to interact with technology, whether it be their smartphones, smart speakers, voice assistants, cars, or even each other. Startups and established companies alike realize the promise of brain-machine interfaces. They are racing to link humans to tech and machines, allowing humans to control digital technology using only their minds, which in turn opens up a whole new world of opportunities for businesses and brands to reach the customer of the future.

Here are 10 companies that are working on connecting our brains or actions to our machines and creating the future of input.

Neurable

Neurable’s mission is very exciting. Ramses Alcaide, founder of Neurable, got the idea of helping people with technology when he was a kid, after his uncle lost his legs in a trucking accident. Alcaide said, “the idea of developing technology for people who are differently abled has been my big, never-ending quest.” Neurable launched onto the brain-computer interface scene in 2017 at SIGGRAPH with a proof-of-concept BCI game called “The Awakening.” Users put on a VR headset to escape from a room with only their minds. In December 2019, Neurable raised a $6 million Series A round to develop an everyday consumer brain-computer interface in the form of headphones.

Alcaide sees neurotechnology built into a pair of headphones as the first step towards a BCI for consumers. Think about stopping, starting, or skipping songs with your mind without ever touching your phone. Interacting with smart devices with just our thoughts through a headphone-like device is impressive enough on its own. For Neurable, it’s the data behind the interactions that shows the real value of BCIs.

Cognitive analytics are “measures of different mental states, especially those aligned with performance.” BCI-enabled headphones could help a person “enter their desired emotion and then have a customized playlist generated to provoke that response.” They could also open a whole new world of metrics for marketers, training, health professionals, and a variety of other industries. Alcaide believes computing is going to become more spatial. He said, “As it continues to go down that path, we need forms of interaction that enable us to more seamlessly interact with our technology.”

MindX

MindX believes the next frontier in computing is a direct link from the brain to the digital world. It is creating this link by “combining neurotechnology, augmented reality and artificial intelligence to create a ‘look-and-think’ interface for next-generation spatial computing applications.” Part of spatial computing is being able to interact with computers beyond a two-dimensional screen.

MindX uses smart glasses to create a link between human brains and technology. Julia Brown, MindX’s CEO, said smart glasses will let wearers access information with a single thought. The glasses pick up on eye movements, and brain waves signal back what the wearer is thinking and where they are looking. BCI-enabled smart glasses open a world of opportunities for visual search. Think about your lost car keys, and the smart glasses can locate them. Wonder what someone is wearing, and get the brand and links to places to buy it from the glasses – all with a thought.

NextMind

While some brain-computer interface companies focus on understanding the brain and cognitive metrics, others focus on real-time device control. NextMind, headquartered in Paris, France, uses a non-invasive BMI “that translates brain signals instantly from the user’s visual cortex into digital commands for any device in real-time.” NextMind debuted their device at CES 2020. Visitors to the booth demoed changing channels on a TV with just their thoughts.

Users wear NextMind on the back of their heads. It “creates a symbiotic connection with the digital world” by combining neural networks and neural signals. The NextMind SDK is open to developers. Its price point reflects the industry’s belief that consumers are ready for the next phase in computer interaction.

Neurosity

Neurosity’s goal is to help developers get focused faster and stay focused longer. Notion (Neurosity’s thought-powered computer) has eight sensors as part of an EEG headset. In their demo, a woman scrolls through a recipe on her tablet while cooking. In another, a man changes the lighting in the room with his mind. The Notion brain sensor can be pre-ordered. The device touts its secure design, saying “it never stores your brainwaves.” That is something to look for in a BCI.

Neurosity launched dev kits in 2019. The Neurosity developer community is one of the signs that brain-computer interfaces have arrived. Developers can write apps for Notion’s brain sensor, which is designed to do two things: detect human intent and quantify the self. Think of it like wearing a fitness tracker for the brain. In April 2020, Neurosity temporarily cut the price of Notion pre-orders to $799 for developers. Neurosity pledges its support to “developers interested in helping quantify the human mind even further” by making its team available to brainstorm, code, and deploy neuro apps.

Kernel

Kernel is a neurotechnology company based in Los Angeles, California. Its aim is to create “a brain interface that develops real-world applications of high-resolution brain activity.” Kernel’s founder and CEO, Bryan Johnson, believes in a world where people are empowered by technology, not limited by it. He sees neuroscience, specifically Kernel’s “neuroscience as a service (NaaS),” as a way to get there. Kernel was featured in I Am Human, a 2020 award-winning documentary about “the scientists and entrepreneurs on a quest to unlock the secrets of the brain.”

Kernel created two different experiments with its technology. One is Speller, which allows participants to type with only their gaze and a visual keyboard. The other is Sound ID, which can decode song IDs based on the brain signals from the listener. These experiments show that with just a helmet, brain scientists can run the same type of experiments as those done in labs with room-size equipment. Using a helmet, brain scientists can study thousands more people than they currently can. Johnson believes this can help people who have suffered strokes and are unable to speak, or those dealing with mental disorders.

Nectome

There are so many applications when it comes to brain-machine interfaces and neuroscience. Nectome’s technology is developed to preserve human memory by studying how the brain physically creates memories. Nectome isn’t just creating a BMI for the present. They’re hoping to change how people “preserve the languages, cultures, and wisdom of the past, and how health care engages with individuals’ memories and personal narratives.”

President of Y Combinator, Sam Altman, is “one of 25 people who have put down a $10,000 refundable deposit to join a waiting list at Nectome.” The catch is that Nectome needs a living brain to capture the memories, so the procedure kills the patient. “Nectome planned to test it with terminally ill volunteers in California, which permits doctor-assisted suicide for those patients.” Altman said of the procedure, “I assume my brain will be uploaded to the cloud.” The startup has faced some setbacks but seems to still be in operation.

Eventually, Nectome believes their biological preservation techniques will be like an episode from Amazon’s Upload TV series. At the end of their life, patients can choose to “upload” themselves into a digital afterlife.

CTRL-labs

CTRL-labs uses non-invasive neural interfaces to “expand human bandwidth.” CTRL-labs recreates the “0s and 1s” of neurons by listening to muscle twitches. It feeds the signals into machine-learning models to decode a person’s intention, and this output is fed back to the wearer to create a symbiotic relationship. Thomas Reardon, CEO of CTRL-labs, said, “AI and Machine Learning can be dominated by us.” CTRL-labs does all of this with a wristband.

Facebook’s leadership have talked about a new type of interface “that includes work around direct brain interfaces that are going to, eventually, one day, let you communicate using only your mind.” In September 2019, Facebook bought CTRL-labs and said the following about the acquisition: “The goal is to eventually make it so that you can think something and control something in virtual or augmented reality.”

Neuralink

Neuralink is a company owned by none other than Elon Musk. The man who made electric cars cool (Tesla) and sends astronauts to space in his own spacecraft (SpaceX) also wants to connect humans to machines. Neuralink takes a slightly different approach to brain-machine interfaces by placing “threads” into the brain. Elon Musk “wants his brain implants to stop humans being outpaced by artificial intelligence.”

Neuralink threads are connected to a 4mm chip called the N1. The chips are “placed close to important parts of the brain and are able to detect messages as they are relayed between neurons, recording each impulse and stimulating their own.” The chip connects to a Bluetooth-enabled wireless device worn over the ear. Currently, the chip is placed via traditional brain surgery, but Musk envisions that in the future it will be inserted virtually painlessly. Applications for Neuralink are endless, from treating neurological disorders to replacing language, and eventually turning humans into cyborgs.

Paradromics

Paradromics developed brain-computer interface technology to help those disconnected from the world by mental illness, paralysis, or other types of brain disorders. Paradromics believes it can meet medical challenges with a technical solution: a high-data-rate brain-computer interface. Similar to Neuralink, Paradromics places electrode arrays on the brain. It does this with a “computer chip that plugs into a part of the brain called the cortex.” With Paradromics’ technology, mental disorders and injuries no longer have to be debilitating, and those affected can be connected back to the world.

Zoolingua

Brain-computer interfaces aren’t just for people. Zoolingua, owned by Con Slobodchikoff, wants people to understand dogs. Its device will allow dog and human to communicate in both directions. The translating dog collar from the movie Up is coming to real life. Zoolingua bases its technology on research: “Observing (through video) dog vocalizations and behavior in specific contexts; classifying the complex forms of communication that occur; and working with computer programs to effectively and accurately decode and translate into US-English.”

According to an Amazon report, “advances in AI and machine learning will enable companies to make devices that can accurately translate a cat’s meows and a dog’s barks into English.” William Higham, co-author of the report, “believes devices that can talk dog could be less than 10 years away.”

Separate from Zoolingua, another person working on decoding Fido’s thoughts is Dr. Gregory Berns of Emory University, a neuroscientist who is also interested in what dogs think. Dr. Berns developed a “go/no-go” test to scan dog brains in MRI machines. The results show “dogs use corresponding parts of their brain to solve similar tasks as people do.” This had not been seen in non-primates before.

What Comes Next

Neuroscience technology is a quickly developing field. It’s one with endless applications for understanding the brain, unlocking human potential, and preserving today’s minds for the future. Some of the companies listed above are working towards specific use cases. Some use direct-brain sensors while others use non-invasive devices.

What each brain-machine interface company has in common is that they see the world as a connected place. It’s going to become even more so. The future of computing is beyond two-dimensional interactions. It’s more than voice, facial recognition, artificial intelligence, and augmented reality.

It’s all these things coming together under the power of the human brain.

—————————————————————————————————————————————————————————

The Pentagon’s Push to Program Soldiers’ Brains

by Michael Joseph Gross

Posted on https://www.theatlantic.com

in the November 2018 issue

The military wants future super-soldiers to control robots with their thoughts.

I. Who Could Object?

“Tonight I would like to share with you an idea that I am extremely passionate about,” the young man said. His long black hair was swept back like a rock star’s, or a gangster’s. “Think about this,” he continued. “Throughout all human history, the way that we have expressed our intent, the way we have expressed our goals, the way we have expressed our desires, has been limited by our bodies.” When he inhaled, his rib cage expanded and filled out the fabric of his shirt. Gesturing toward his body, he said, “We are born into this world with this. Whatever nature or luck has given us.”

His speech then took a turn: “Now, we’ve had a lot of interesting tools over the years, but fundamentally the way that we work with those tools is through our bodies.” Then a further turn: “Here’s a situation that I know all of you know very well—your frustration with your smartphones, right? This is another tool, right? And we are still communicating with these tools through our bodies.”

And then it made a leap: “I would claim to you that these tools are not so smart. And maybe one of the reasons why they’re not so smart is because they’re not connected to our brains. Maybe if we could hook those devices into our brains, they could have some idea of what our goals are, what our intent is, and what our frustration is.”

So began “Beyond Bionics,” a talk by Justin C. Sanchez, then an associate professor of biomedical engineering and neuroscience at the University of Miami, and a faculty member of the Miami Project to Cure Paralysis. He was speaking at a TEDx conference in Florida in 2012. What lies beyond bionics? Sanchez described his work as trying to “understand the neural code,” which would involve putting “very fine microwire electrodes”—the diameter of a human hair—“into the brain.” When we do that, he said, we would be able to “listen in to the music of the brain” and “listen in to what somebody’s motor intent might be” and get a glimpse of “your goals and your rewards” and then “start to understand how the brain encodes behavior.”

He explained, “With all of this knowledge, what we’re trying to do is build new medical devices, new implantable chips for the body that can be encoded or programmed with all of these different aspects. Now, you may be wondering, what are we going to do with those chips? Well, the first recipients of these kinds of technologies will be the paralyzed. It would make me so happy by the end of my career if I could help get somebody out of their wheelchair.”

Sanchez went on, “The people that we are trying to help should never be imprisoned by their bodies. And today we can design technologies that can help liberate them from that. I’m truly inspired by that. It drives me every day when I wake up and get out of bed. Thank you so much.” He blew a kiss to the audience.

The mission is to make human beings something other than what we are, with powers beyond the ones we’re born with.

A year later, Justin Sanchez went to work for the Defense Advanced Research Projects Agency, the Pentagon’s R&D department. At DARPA, he now oversees all research on the healing and enhancement of the human mind and body. And his ambition involves more than helping get disabled people out of their wheelchair—much more.

DARPA has dreamed for decades of merging human beings and machines. Some years ago, when the prospect of mind-controlled weapons became a public-relations liability for the agency, officials resorted to characteristic ingenuity. They recast the stated purpose of their neurotechnology research to focus ostensibly on the narrow goal of healing injury and curing illness. The work wasn’t about weaponry or warfare, agency officials claimed. It was about therapy and health care. Who could object? But even if this claim were true, such changes would have extensive ethical, social, and metaphysical implications. Within decades, neurotechnology could cause social disruption on a scale that would make smartphones and the internet look like gentle ripples on the pond of history.

Most unsettling, neurotechnology confounds age-old answers to this question: What is a human being?

II. High Risk, High Reward

In his 1958 State of the Union address, President Dwight Eisenhower declared that the United States of America “must be forward-looking in our research and development to anticipate the unimagined weapons of the future.” A few weeks later, his administration created the Advanced Research Projects Agency, a bureaucratically independent body that reported to the secretary of defense. This move had been prompted by the Soviet launch of the Sputnik satellite. The agency’s original remit was to hasten America’s entry into space.

During the next few years, ARPA’s mission grew to encompass research into “man-computer symbiosis” and a classified program of experiments in mind control that was code-named Project Pandora. There were bizarre efforts that involved trying to move objects at a distance by means of thought alone. In 1972, with an increment of candor, the word Defense was added to the name, and the agency became DARPA. Pursuing its mission, DARPA funded researchers who helped invent technologies that changed the nature of battle (stealth aircraft, drones) and shaped daily life for billions (voice-recognition technology, GPS devices). Its best-known creation is the internet.